Action Segmentation with Joint Self-Supervised Temporal Domain Adaptation

Abstract

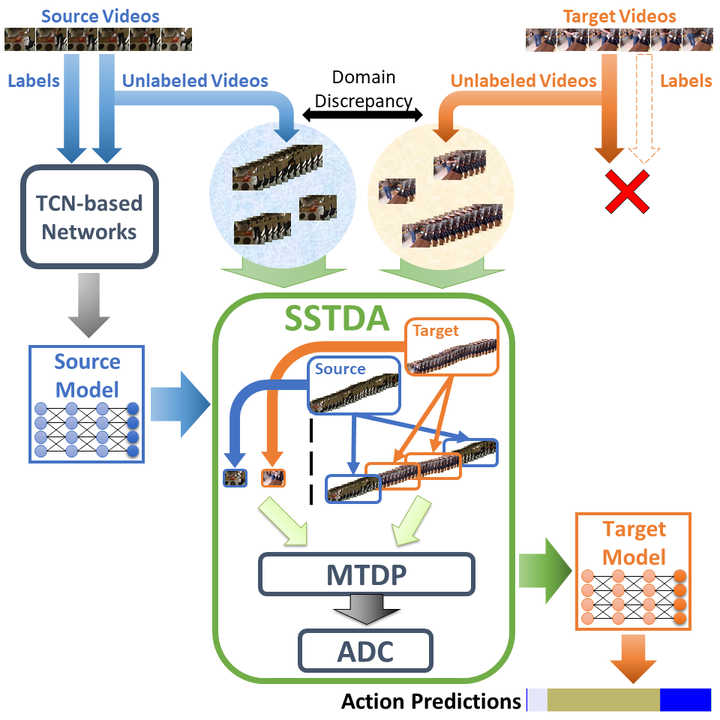

Despite the recent progress of fully-supervised action segmentation techniques, their performance is still not fully satisfactory. One main challenge is spatio-temporal variation (e.g., different people may perform the same activity in various ways). We therefore exploit unlabeled videos to address this problem by reformulating action segmentation as a cross-domain problem, in which the domain discrepancy is caused by spatio-temporal variations. To reduce the discrepancy, we propose Self-Supervised Temporal Domain Adaptation (SSTDA), which contains two self-supervised auxiliary tasks (binary and sequential domain prediction) that jointly align cross-domain feature spaces embedded with local and global temporal dynamics, achieving better performance than other Domain Adaptation (DA) approaches. On three challenging benchmark datasets (GTEA, 50Salads, and Breakfast), SSTDA outperforms the current state-of-the-art method by large margins (e.g., for the F1@25 score, from 59.6% to 69.1% on Breakfast, from 73.4% to 81.5% on 50Salads, and from 83.6% to 89.1% on GTEA), and requires only 65% of the labeled training data for comparable performance, demonstrating the usefulness of adapting to unlabeled target videos across variations.
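To make the two auxiliary tasks more concrete, here is a minimal sketch of how their self-supervised labels could be constructed. It is not the authors' implementation: the function names, the equal-length segment split, and the choice of enumerating domain orders as classes are all assumptions made for illustration, and the domain classifiers and the feature alignment itself are not shown.

```python
import random
from itertools import combinations

SOURCE, TARGET = 0, 1  # hypothetical domain ids


def binary_domain_labels(num_source_frames, num_target_frames):
    """Binary domain prediction: one label per frame (0 = source, 1 = target)."""
    return [SOURCE] * num_source_frames + [TARGET] * num_target_frames


def split_into_segments(frames, num_segments):
    """Split a list of frames into contiguous, near-equal segments (assumed scheme)."""
    n = len(frames)
    bounds = [round(i * n / num_segments) for i in range(num_segments + 1)]
    return [frames[bounds[i]:bounds[i + 1]] for i in range(num_segments)]


def sequential_domain_sample(source_frames, target_frames, num_segments, rng):
    """Sequential domain prediction: shuffle segments from both domains.

    Returns the shuffled segments and the order of their domains, which
    serves as the self-supervised label (no action annotations needed).
    """
    tagged = [(SOURCE, s) for s in split_into_segments(source_frames, num_segments)]
    tagged += [(TARGET, s) for s in split_into_segments(target_frames, num_segments)]
    rng.shuffle(tagged)
    segments = [seg for _, seg in tagged]
    domain_order = tuple(dom for dom, _ in tagged)
    return segments, domain_order


def domain_order_classes(num_segments):
    """Enumerate every possible domain order, so the order can be a class index."""
    total = 2 * num_segments
    classes = []
    for target_positions in combinations(range(total), num_segments):
        order = [SOURCE] * total
        for p in target_positions:
            order[p] = TARGET
        classes.append(tuple(order))
    return classes


if __name__ == "__main__":
    rng = random.Random(0)
    src = list(range(10))        # stand-ins for source frame features
    tgt = list(range(100, 110))  # stand-ins for target frame features
    segments, order = sequential_domain_sample(src, tgt, 2, rng)
    classes = domain_order_classes(2)
    print("domain order:", order, "class index:", classes.index(order))
```

Both label types depend only on which domain a frame or segment came from, which is why unlabeled target videos can take part in training.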

Videos

Overview with fewer technical details:

1-min talk video:

5-min talk video:

Resources

Other Links:

Citation

Min-Hung Chen, Baopu Li, Yingze Bao, Ghassan AlRegib, and Zsolt Kira, “Action Segmentation with Joint Self-Supervised Temporal Domain Adaptation”, IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.

BibTeX

@inproceedings{chen2020action,
  title={Action Segmentation with Joint Self-Supervised Temporal Domain Adaptation},
  author={Chen, Min-Hung and Li, Baopu and Bao, Yingze and AlRegib, Ghassan and Kira, Zsolt},
  booktitle={IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
  year={2020}
}

Members

¹Georgia Institute of Technology, ²Baidu USA

*work done during an internship at Baidu USA
